
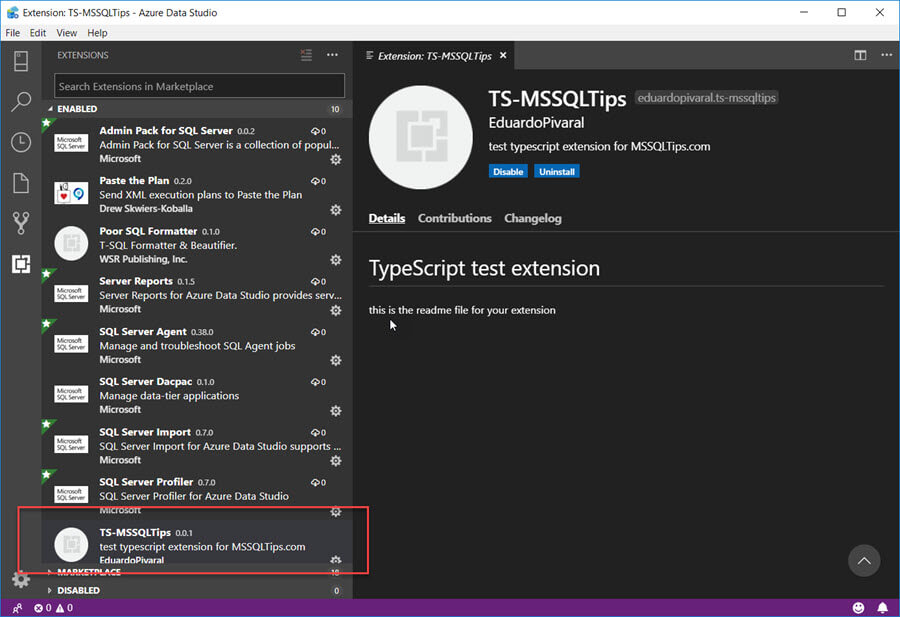

In the example above we are storing everything for 5 years. It's set to months so the partitions are smaller.

Refresh Rows

If this was running every single day then you would only need to refresh rows in the last 1 day. However, 1 month has been used just in case the job is suspended or doesn't run for any reason.

Detect Data Changes

Detect Data Changes has been used. The month's data will only be refreshed if the ImportDate for a record has changed (or there are new records). No records are deleted, so we don't need to worry about that here.

If you want to use Detect Data Changes you must have an update date on your source data.

- Are you running straight from source into Power BI and there is no update date available? Then you will need to make the decision to have a reporting database layer, where you can add UpdateDate logic to your table.
- Is there a possibility that records are also deleted? You need to deal with this slightly differently. Add an isDeleted column and, in the warehouse, update LastUpdatetime and set isDeleted to 1. This will come through to your model as an update, and you can filter out all the isDeleted records.

Publish the new Power BI Report and Data Flow.

You might be thinking at this point, "But I don't want the filters that I have set for Desktop to be applied in Service. I want to see all my data in Service." Don't worry, in Service RangeStart and RangeEnd don't keep the dates specified for the filters in Desktop. They are set via your incremental refresh policy, so they are set as the partitions for our 60 months. Instead of setting it to 5 years, meaning there is one RangeStart and one RangeEnd, you get a RangeStart for month 1, a RangeEnd for month 1, a RangeStart for month 2, a RangeEnd for month 2 and so on, breaking your 5 years down into much smaller partitions to work with.

Testing Your Incremental Refresh
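To make the idea of 60 monthly partitions a bit more concrete, here is a minimal Python sketch that lists one (RangeStart, RangeEnd) pair per calendar month. It only illustrates the partitioning described in this post and is not how the Power BI Service builds its partitions internally. The helper names `add_months` and `monthly_partitions`, the example date, and the choice to count the current month as one of the 60 are all assumptions made for illustration.

```python
from datetime import datetime


def add_months(first_of_month, n):
    """Return the first day of the month that is n months away (n may be negative)."""
    total = first_of_month.year * 12 + (first_of_month.month - 1) + n
    return datetime(total // 12, total % 12 + 1, 1)


def monthly_partitions(as_of, months_to_store=60):
    """Build (RangeStart, RangeEnd) pairs, oldest first, one per calendar month.

    Assumption: the month containing `as_of` counts as the newest partition.
    """
    current = datetime(as_of.year, as_of.month, 1)
    first = add_months(current, -(months_to_store - 1))
    return [
        (add_months(first, i), add_months(first, i + 1))
        for i in range(months_to_store)
    ]


# Hypothetical refresh date, chosen only for the example.
partitions = monthly_partitions(datetime(2020, 6, 15))

for range_start, range_end in partitions[:2]:
    print(range_start.date(), "to", range_end.date())
```

Each RangeEnd is the next partition's RangeStart, so a refresh that only touches the last month needs to reload a single small partition instead of the full 5 years.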
- Are new rows simply added to the dataset in Power BI?
- If rows can be updated, how far back does this go?
- How many years' worth of data do you want to retain?
- Which tables in your dataset need incremental refresh?
- Can you define the static date within the table that will be used for the incremental refresh?

Each of these points is very important and will establish what you need to do to set up the incremental refresh, from your data source up to Power BI Desktop and Service.

Set up Incremental Refresh in Power Query Editor

Go to Transform Data to get to the Power Query Editor (you can either be in Desktop or creating a dataflow in Service).

The two parameters that need setting up for incremental loading are RangeStart and RangeEnd. RangeStart and RangeEnd are set in the background when you run Power BI.

Query Folding: RangeStart and RangeEnd will be pushed to the source system. You do get a warning message if you can't fold the query. It's not recommended to run incremental processing on data sources that can't query fold (flat files, web feeds). It's possible to query fold over a SharePoint list. The recommendation is to set incremental processing up over a relational data store.

For the Desktop, allow yourself a good slice of the data to work with, for example a year or two years' worth of data.

Filter the data in the Model

Add your parameters to every table in your dataset that requires incremental load. Find your static date, for example Order Date, Received Date etc. Close and Apply.

Define your Incremental Refresh policy in Power BI Desktop

Go to your first table and choose Incremental refresh.

Example screenshot of an incremental refresh policy

Store Rows

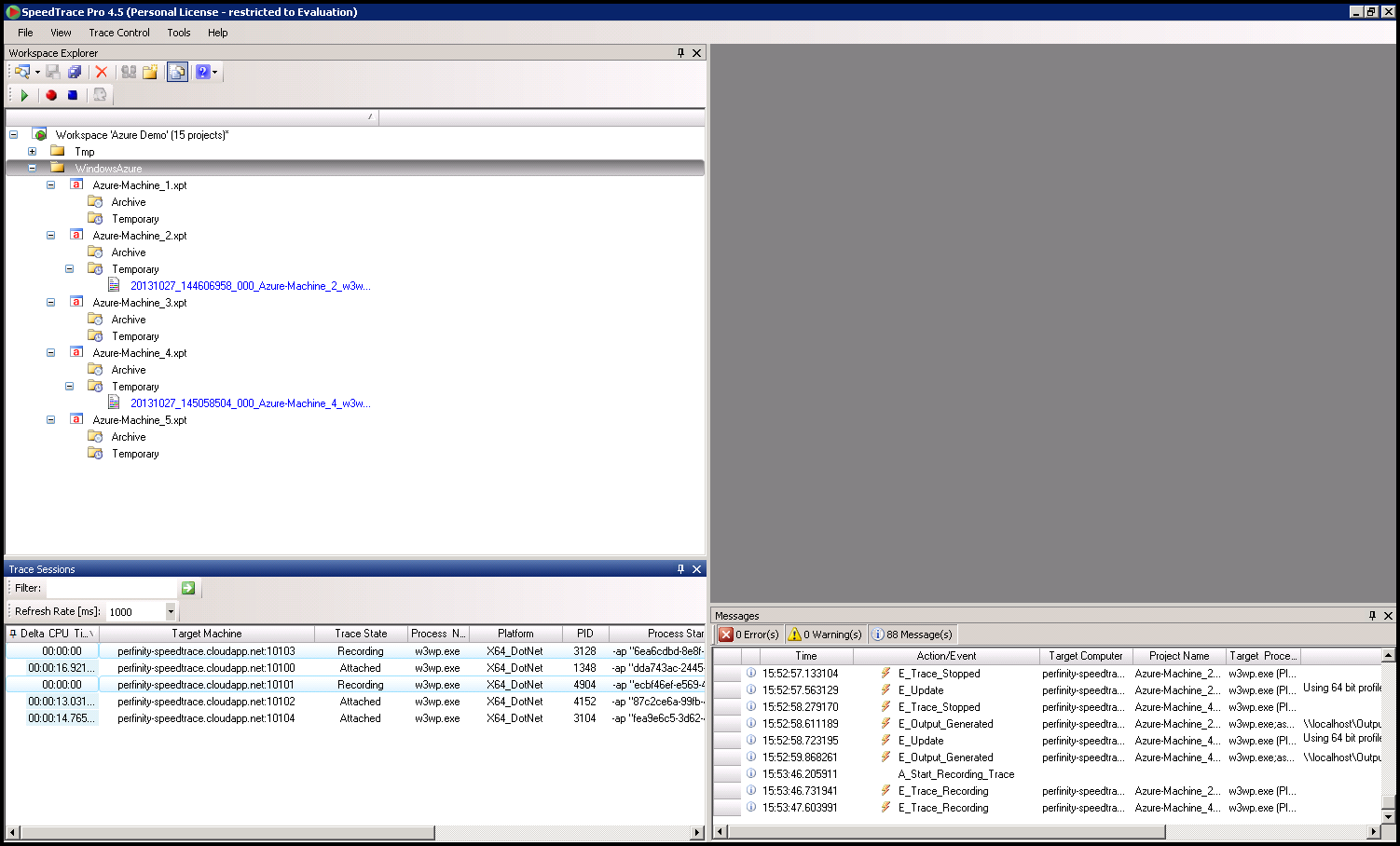

Incremental Refresh became available for Power BI Pro a few months ago, but when tested there was an issue: the error "Resource Name and Location Name Need to Match". This should have been fixed in April, so here is a quick checklist of how you approach incremental refresh.

Define your Incremental Refresh Policy

SQL Server Debugger – Toggle Breakpoints

Step Into

Step Into executes the current statement and then stops at the next statement. If the current statement is a function, the debugger steps into that function; otherwise it stops at the next statement. A new debugger tab is opened for each function call stepped into. Once inside the function call, debugging can continue until the end of the function, or until you click "Step Return".

You can view variables and their values during execution in the Variables tab. If the debugged procedure calls other procedures, the Stack Frames tab shows the path of execution across all external procedures that are called.

Target Query Window

A separate Query Window can be opened as a Target Query Window to run queries before the debugging starts.

If Profiling is enabled, the procedure debugger will show the time taken for each of the procedure's executed statements. While in Profile Mode a full run will be executed one step at a time, allowing a full time profile per step.
